In summary:

- Tech Advisor explores using Google Gemini AI to support elderly relatives through medication management, scam protection, and daily assistance features.

- The AI assistant offers voice interaction, calendar integration for reminders, and screen sharing to detect fraudulent emails targeting vulnerable seniors.

- Gemini Live provides accessible communication for older users, while AI tools can help combat scams that, according to FBI data, cost older Americans at least $3.4 billion in 2023.

AI is still finding its feet in the world. It has its issues, it’s overhyped in parts, and it’s mind-blowingly amazing in others. But one area where AI assistants like Google Gemini may be able to pitch in right now is elder care.

Unlike many products and services in the care sector, Gemini is very affordable. Most of its features are free, and even the premium Google AI Pro subscription costs a relatively modest £18.99/$19.99 per month. It could make a real difference to an older relative’s day-to-day life.

Of course, it’s not a domestic robot and shouldn’t be relied on for people with extensive care needs. But even in their current state, AI assistants such as Gemini can vastly improve a slightly forgetful older relative’s quality of life.

It’s also worth noting that AI is going through the same kind of convergence we’ve seen with phones, PCs, and other tech trends in the past. So while the suggestions here are specific to Google Gemini, you’re likely to find very similar options in rivals such as ChatGPT.

Here’s why Gemini makes such a compelling assistant when looking after older relatives.

Voice interaction is key

Gemini’s biggest strength when it comes to older users is Gemini Live, a mode that essentially lets you have a phone call with an AI assistant.

It does a better job of understanding mumbles and accents than Google Assistant ever did, and it can ask for more context if needed. And if you’re a little hard of hearing, you can simply turn the volume up until Gemini’s voice is clear.

This makes it far better suited to older people, for whom text may be too small to read and typing can be slow.

Speaking is also more natural than writing. If the person using the AI says, “I don’t understand, what does (insert technical term) mean?” the AI will break it down into simple terms for them. For all its faults, explaining complex things simply is one of AI’s strongest points.

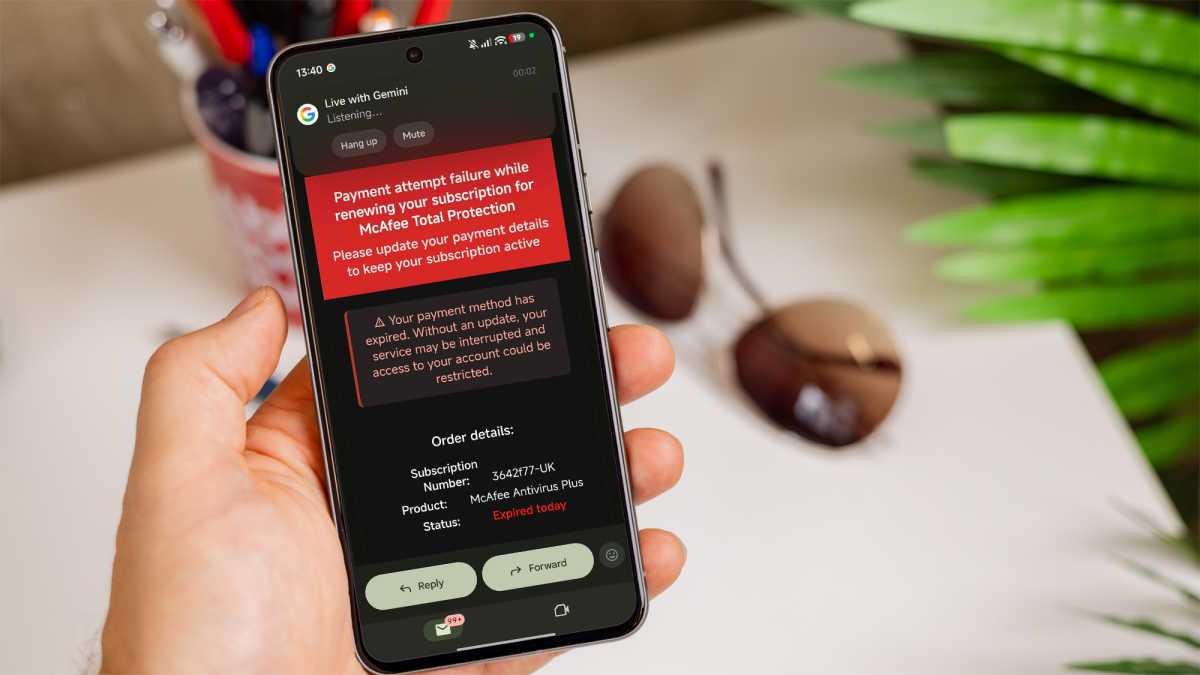

AI can help stop a scam

The elderly are often a target for scams of every kind. FBI data from 2023 suggests older people in the US lost at least $3.4 billion to scammers over the course of that year. Those scammers are also pretty sophisticated and constantly evolving, so it’s hard to teach your elderly relatives exactly what to look out for.

However, AI tools like Google Gemini may be the answer. First, there’s call screening. If a suspected scammer is calling, many phones will now flag it quite clearly.

With AI, some phones can now answer a call and ask the caller what it’s about while the user follows along, and the user can tap options to get more information without ever speaking to the caller directly. On Google Pixel phones, this feature is called Call Screen.

“Screen Share” is a feature on Google Gemini and other popular AI platforms that works on phones and desktops. It lets the AI see whatever the person using it is looking at, which makes it ideal for checking suspicious eBay listings and shifty emails. So you don’t need to teach your relative how to spot an email that isn’t actually from a version of Elon Musk who’s ready to shed some wealth. You just teach them how to get a second opinion from AI.

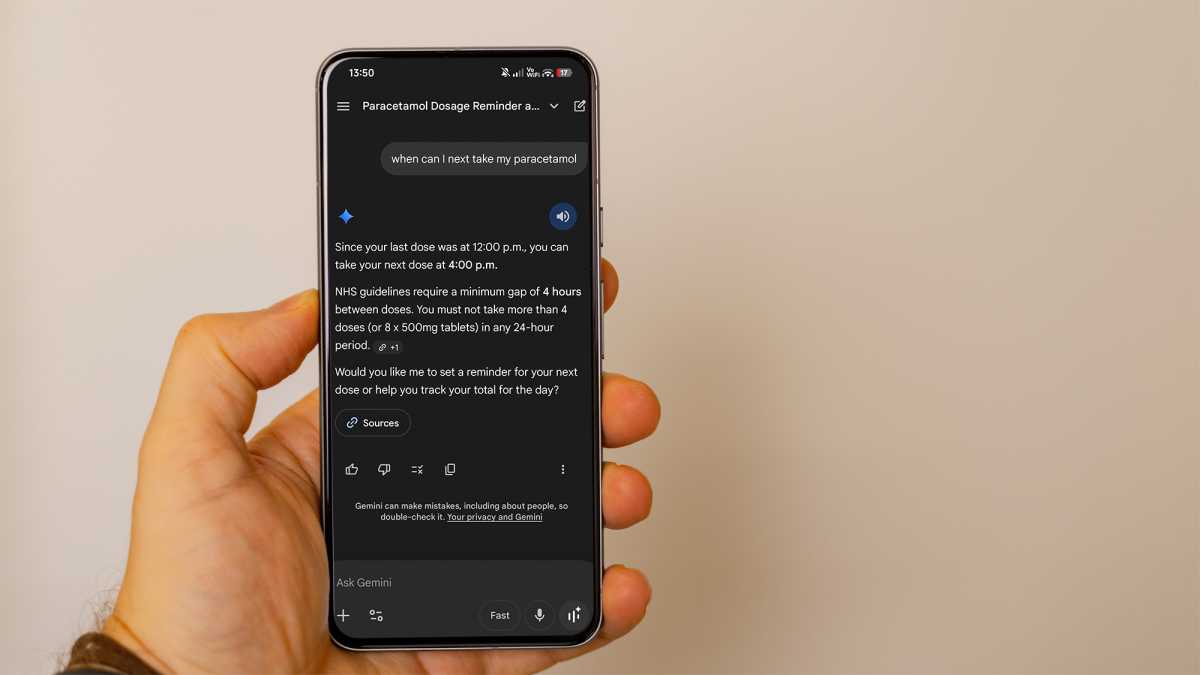

Medicine time becomes a breeze

This section does carry some risk, as hallucinations are a thing. However, the risk is likely to be low, because companies like Google are very aware of the potential for lawsuits over AI mistakes and tend to put a hard stop on anything that might attract bad press or legal action.

“Gemini told my grandfather to shove his antibiotics up his nose” certainly wouldn’t look good in the papers, so it’s reasonable to assume it’s fairly safe in this one area of medical advice.

Pill bottles and the instructions printed on them can be difficult to read at the best of times. But just as you can share your screen, you can share your view of the world too.

And you don’t even have to take a photo these days. Gemini has a feature called ‘Live View’ that gives the assistant access to your phone’s camera. It can then see whatever you’re pointing it at, including a pill bottle with small-print instructions. You can ask it to read those instructions to you word for word or summarise them.

Google’s AI can now also be given access to apps like your calendar, which adds extra utility here. If, for example, a pill has to be taken twice a day, morning and night, for seven days, saying something as simple as “can you remind me to take this?” can set up a string of calendar reminders over the course of that week.

Gemini’s more advanced “thinking” mode can even remember that you’re taking this medication, which may come in handy if you mention any side effects later on.

It’s the ultimate explainer

Explaining things is an AI assistant’s strong point. They can’t really think in the abstract, so long, complex hypothetical scenarios can trip them up, but they’re remarkably good at taking complex, established information and explaining it to you as if you were five.

Again, this is handy for the medication scenario, as you can quiz Gemini about the pills you’re taking (though your doctor should always be your first port of call). You can also ask Gemini and other popular AI assistants about all sorts of other things in everyday life.

Earlier this year, I heard about a fellow tech journalist replacing a fridge’s compressor with help from AI. That was before Live View was widely available, so he was simply sending it photos as he went.

This area is a little less reliable than others, but in a surprisingly high number of cases, you or a DIY-savvy relative can use it to identify an object and work out what can be done with it. Trying to repair a blender? The AI can often tell you where the pesky hidden screw that stops it coming apart is.

Does an object have a hidden spring that will launch itself into another dimension once you take the cover off? Google’s AI can warn you in advance and suggest opening it up inside a Tupperware box or something similar.

Valuable companionship – but don’t go too far

It’s time to address the elephant in the room and some of the associated dangers. First, one of AI’s selling points is just how natural its writing and conversational style are. This especially applies to the likes of Gemini, ChatGPT, Grok, and other top-level bots. It’s both a side effect of their training and, to some degree, something their creators are striving for.

This makes AI an appealing companion for lonely people, and loneliness is widely considered a major problem among the elderly. It will talk about pretty much anything that isn’t completely illegal.

So it’s well up for a chat about that obscure show from the ’60s your parents miss, but any grandmas looking to add a Semtex recipe to the family cookbook are sadly out of luck. On the free tier, you get a limited number of the more advanced “thinking” responses (formerly exclusive to Gemini Pro), but even the basic “fast” ones will do for most conversations.

However, with this comes one of AI’s biggest dangers. There’s a phenomenon called ‘AI psychosis’, which refers to a person becoming so enamoured with an LLM that they convince themselves the bot is a real person.

We’re in for a lot of conversations about sentience, what makes us human, and the rights of “digital beings” over the next few decades. But for now, AI is just very fancy predictive text, and believing otherwise is a little wacky. That said, AI psychosis can happen to the best of us. Even a Google engineer, Blake Lemoine, fell for it while working on LaMDA, a precursor to Gemini.

Elderly people may be more susceptible to this than most. The same things that make them easy scam targets may make them more likely to believe the bot they’re having long conversations with has some kind of soul.

You may be thinking, “What’s the harm if Grandpa thinks his phone has a person in it now?”

For the most part, AI psychosis isn’t a major threat; it’s just a bit silly. But all of these models are in constant development, and one of the biggest dangers is that a tweak by OpenAI or Google could drastically change the “personality” of your elderly relative’s new companion. If the Gemini 3 that someone loved gets killed off and replaced by Gemini 4, this could cause real grief that they otherwise would never have had to feel.

This isn’t a far-fetched scenario either. OpenAI’s switch from GPT-4o to the more “sterile” GPT-5 caused a lot of controversy amongst its user base.

Overall, AI is one of the best tools out there for supporting relatives who need a bit more help. Just remember to keep an eye on the situation in general and make sure they’re staying safe. If in any doubt, double-check the information with other trusted sources.